People often feel the need to frame things as a battle between two forces (“libs” vs. “conservatives,” or “SJWs” vs. “anti-SJWs”). Any concern or opinion voiced predominantly by one side gets automatically shot down by the other.

I’m seeing these kinds of knee-jerk responses in conversations about algorithms trained to make predictions about individuals. Depending on where the algorithm is used, these predictions can affect anything from the health care you receive to whether you’re hired for a job.

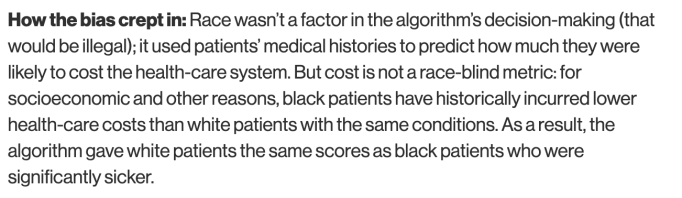

One example is a medical algorithm that was significantly more likely to recommend special health care programs for white patients than for black patients who were equally sick. The factor that shaped the decision-making in this case wasn’t even race, at least not directly. From a recent MIT Technology Review article on this issue:

One of the remarks I regularly hear (and read) about this topic is that these algorithms are upsetting people because they reflect “facts not feelings” and that “facts don’t lie.” Ok, maybe facts don’t lie, but what do they actually reflect? What datasets are you training these algorithms on, and what do the data really tell you about people? (Not just groups of people, but individuals who are on the receiving end of these predictions.) The fact that members of one group may have historically been more likely to receive worse health care, on average, than members of another group doesn’t mean we need to perpetuate the problem.
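To make the “what do the facts actually reflect?” question concrete, here is a minimal sketch rather than a description of any real system. It assumes, purely for illustration, that a program ranks patients by their past health care spending as a stand-in for how sick they are. The `Patient` class, the group labels, and all the numbers are hypothetical. Both groups are equally sick, but group B has historically received less care for the same level of illness.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str        # hypothetical group label, "A" or "B"
    conditions: int   # how sick the patient actually is
    past_cost: float  # historical spending, used here as a proxy for need


def make_patients():
    """Build a toy population in which both groups are equally sick."""
    patients = []
    for conditions in range(1, 6):
        # Assumption for illustration: for the same illness, group B
        # historically received (and was billed for) less care than group A.
        patients.append(Patient("A", conditions, 1000.0 * conditions))
        patients.append(Patient("B", conditions, 700.0 * conditions))
    return patients


def select_for_program(patients, score, slots):
    """Pick the `slots` patients with the highest score."""
    ranked = sorted(patients, key=score, reverse=True)
    return ranked[:slots]


def count_by_group(selected):
    """Count how many selected patients belong to each group."""
    counts = {"A": 0, "B": 0}
    for patient in selected:
        counts[patient.group] += 1
    return counts


patients = make_patients()

# Ranking by the proxy (past spending) versus by actual illness.
by_cost = count_by_group(select_for_program(patients, lambda p: p.past_cost, 4))
by_need = count_by_group(select_for_program(patients, lambda p: p.conditions, 4))

print(by_cost)  # {'A': 3, 'B': 1}
print(by_need)  # {'A': 2, 'B': 2}
```

The spending figures are accurate “facts” in this toy world, and the ranking never looks at the group label. It still favors group A, because the number it relies on records who got care in the past, not who needs it now.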

The biases produced by these algorithms – biases which may be based on class, income, race, sex, or other dimensions – don’t necessarily reflect unchanging truths about human nature or social problems we can never address. So it’s disheartening to see people crow about how decisions based on algorithms are reflecting the “real truth” underneath the layers of PC-ness we’re festooned with as a society.

The medical algorithm mentioned in this post was examined, and the problem got addressed. In many other cases, we don’t know why algorithms are making predictions or decisions in certain ways. We don’t know what data they’ve been trained on, and companies are keeping quiet about it. There may be little accountability and little option to appeal a decision. This is a critical issue to discuss, ideally without the knee-jerk responses and the thought-terminating clichés (chants of “facts not feelings” from people who are also acting emotionally about this issue, though they don’t recognize their satisfaction or delight as feelings).